Why We Built an AI Email Tool That Sends Fewer Emails (And Gets More Replies)

Most AI outbound tools optimize for volume. We optimized for timing, signal discovery, and not annoying buyers. Here’s what we learned building systems for 20+ companies.

Every AI email tool promises the same thing:

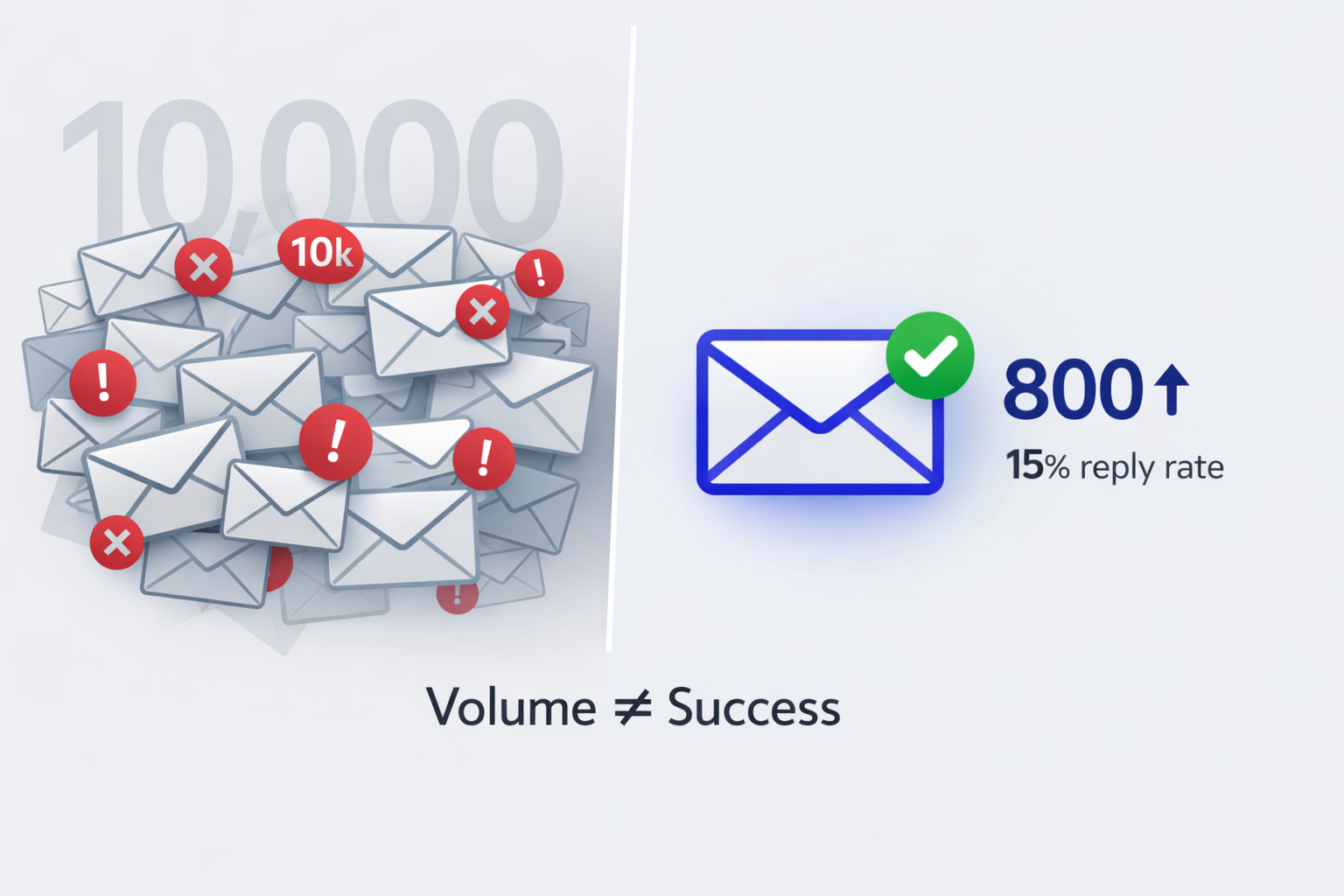

"Send 10,000 personalized emails per month with one click."

That’s not a feature. That’s the problem.

We spent the last year building AI-assisted outbound systems for 20+ companies. The teams getting 10–18% reply rates weren’t sending more emails - they were sending fewer, better-timed ones.

This post breaks down why volume-first outbound is dying, what actually works, and how we built a system that automates the tedious parts without becoming spam.

1) The volume addiction problem

When we talk to outbound teams, the conversation goes like this:

- Us: “What’s your reply rate?”

- Them: “About 2–3%.”

- Us: “What if you could get 15% by sending 80% fewer emails?”

- Them: “But then we’d only send 1,000 emails/month. That feels… small.”

That’s volume addiction. Sending fewer emails feels like doing less work - even when the outcome (meetings) is better.

The math

| Approach | Emails/month | Reply rate | Replies | Risk profile |
|---|---|---|---|---|
| Volume-first | 5,000 | 2% | 100 | High (spam complaints, domain burn) |
| Signal-first | 800 | 15% | 120 | Lower (better timing, fewer touches) |

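Slightly more replies (120 vs. 100) from 84% fewer emails, which means far fewer chances to trigger spam complaints or burn a domain.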
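The arithmetic behind that comparison is easy to check. The snippet below is only a sanity check on the table's numbers; the `replies` helper is a name made up for illustration.

```python
def replies(emails_per_month: int, reply_rate: float) -> int:
    """Expected replies for a given monthly volume and reply rate."""
    return round(emails_per_month * reply_rate)


# Figures taken from the table above.
volume_first = replies(5_000, 0.02)
signal_first = replies(800, 0.15)

# Share of sends eliminated by moving from 5,000 to 800 emails/month.
volume_reduction = 1 - 800 / 5_000

print(volume_first, signal_first, f"{volume_reduction:.0%}")
```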

What we saw across every company

| High-volume, low-quality | What breaks | What it looks like |
|---|---|---|
| Deliverability decay | Filters + reputation penalties | Open rates fall over time |
| Brand damage | Prospects remember you as noise | “Stop emailing me” replies |
| Rep distrust | Tools feel spammy and random | Reps revert to manual copy/paste |

| Low-volume, high-quality | What improves | Why it matters |
|---|---|---|
| Deliverability | Fewer spam triggers | More messages land in Primary |
| Replies | Timing + relevance | Higher reply density |
| Reputation | “Helpful” impression | More referrals, fewer blocks |

2) What “better timing” actually means

Most tools automate signal monitoring, not signal discovery.

They’ll scrape LinkedIn for job postings.

They’ll track funding announcements.

They’ll monitor tech stack changes.

But they won’t find:

- A founder venting about their current tool on a podcast

- A VP complaining in a niche Discord server

- A team asking for recommendations in a Reddit thread

- Someone sharing their tech challenges at a conference

These signals often convert at 3–5x the rate of the commonly tracked ones because:

- Almost nobody else sees them

- They’re fresh (often within 24–48 hours)

- They reveal specific pain points, not just job titles

Our approach

Humans find signals. AI does everything else.

| Signal source | Why it’s high intent | Example |
|---|---|---|
| Podcast quote | Specific, unfiltered pain | “We spend 20 hours/week on X” |
| Community (Discord) | Real-time buying chatter | “Anyone recommend a tool for…” |
| Reddit thread | Honest objections | “We tried Y and it failed because…” |
| Conference notes | Immediate context | “We’re evaluating vendors next month” |

3) From signal to email: where AI actually helps

Once a human finds a real signal, AI becomes valuable.

Step 1: context analysis

AI ingests:

- The signal itself (podcast quote, Reddit comment, etc.)

- Prospect + company context

- Your positioning + product

- Your best-performing emails to similar buyers

Step 2: hypothesis generation

AI generates multiple angles - not one generic draft.

Example signal: CTO complained about “spending 20 hours/week manually analyzing customer feedback.”

| Hypothesis angle | What it does | Example opener |
|---|---|---|
| Direct pain → solution | Maps pain to a specific outcome | “Heard you mention 20hrs/week on feedback analysis - teams at your stage cut that to ~2hrs with…” |
| Timing pattern | Anchors to a predictable inflection point | “Most teams hit this wall around X - the ones who scale past it do Y…” |
| Social proof cluster | Uses nearby-market proof | “3 other CTOs in your space mentioned this exact issue - here’s what worked…” |

Step 3: human selection

The human chooses the angle that fits the prospect. AI is not deciding strategy - it’s doing the research + drafting.
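Steps 1–3 can be sketched as a small pipeline. This is a minimal illustration, not our production code: the `Signal` and `Hypothesis` types, the `ANGLES` names, and the `draft` callable (a stand-in for whatever model call you use) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Signal:
    source: str    # e.g. "podcast", "reddit", "discord"
    quote: str     # the words the prospect actually used
    prospect: str
    company: str


@dataclass
class Hypothesis:
    angle: str
    opener: str


# Hypothetical labels for the three angles in the table above.
ANGLES = ("direct_pain", "timing_pattern", "social_proof")


def build_context(signal: Signal, positioning: str, best_emails: list[str]) -> str:
    """Step 1: bundle everything the drafting model should see."""
    examples = "\n---\n".join(best_emails)
    return (
        f"Signal ({signal.source}): {signal.quote}\n"
        f"Prospect: {signal.prospect} at {signal.company}\n"
        f"Positioning: {positioning}\n"
        f"Reference emails:\n{examples}"
    )


def generate_hypotheses(
    context: str, draft: Callable[[str, str], str]
) -> list[Hypothesis]:
    """Step 2: one draft per angle. `draft` is an assumed model wrapper."""
    return [Hypothesis(angle, draft(context, angle)) for angle in ANGLES]
```

Step 3 stays outside the code on purpose: the function returns every angle, and a person picks which one goes forward.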

Step 4: guardrails + automation

Before anything sends, guardrails check:

- Spam triggers

- Tone mismatches

- Generic phrasing (could apply to anyone)

- Weird claims / factual issues

High-confidence drafts can auto-send. Everything else can queue for optional human review.
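A rough sketch of how such a guardrail pass might route drafts is shown below. The phrase lists, flag names, and the rule that "no flags" means "high confidence" are assumptions for illustration. Checking factual claims needs a model or a human and is not covered here.

```python
import re
from dataclasses import dataclass, field

# Hypothetical phrase lists; a real system would tune these per sender.
SPAM_PHRASES = ("act now", "limited time", "100% free", "guaranteed")
GENERIC_PHRASES = ("i hope this email finds you well", "companies like yours")


@dataclass
class GuardrailResult:
    flags: list[str] = field(default_factory=list)

    @property
    def auto_send(self) -> bool:
        # Assumption: a draft with no flags counts as high confidence.
        return not self.flags


def check_draft(draft: str, signal_quote: str) -> GuardrailResult:
    result = GuardrailResult()
    text = draft.lower()
    if any(phrase in text for phrase in SPAM_PHRASES):
        result.flags.append("spam_trigger")
    if any(phrase in text for phrase in GENERIC_PHRASES):
        result.flags.append("generic_phrasing")
    # A draft that never references the signal could apply to anyone.
    if signal_quote.lower() not in text:
        result.flags.append("missing_signal_reference")
    # Crude tone check: repeated exclamation marks or shouted words.
    if draft.count("!") > 1 or re.search(r"\b[A-Z]{4,}\b", draft):
        result.flags.append("tone_mismatch")
    return result
```

Drafts where `auto_send` is true can go out; anything with flags goes to the review queue along with the reasons it was held.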

4) What actually changed (results)

Across 20+ companies, when teams shifted from volume-first to signal-first:

- Email volume dropped 60–75%

- Reply rates increased 3–5x (2–3% → 10–18%)

- Meetings booked increased 40–60% despite fewer sends

- Deliverability improved (fewer spam complaints)

- Sales cycles shortened 20–30% (timing = warmer leads)

The catch: this doesn’t work if you measure success by “emails sent.”

The unlock is measuring:

- Meetings booked per hour invested

- Replies per 100 sends

- Deliverability trend line over time
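These three metrics are simple to compute once the inputs are tracked. The sketch below assumes a hypothetical `OutboundPeriod` record per week or month. The trend is a plain least-squares slope over whatever per-period deliverability figure you already log.

```python
from dataclasses import dataclass


@dataclass
class OutboundPeriod:
    sends: int
    replies: int
    meetings: int
    hours_invested: float

    def replies_per_100_sends(self) -> float:
        return 100 * self.replies / self.sends

    def meetings_per_hour(self) -> float:
        return self.meetings / self.hours_invested


def trend_slope(values: list[float]) -> float:
    """Least-squares slope of values against their index (change per period)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator
```

A positive slope on inbox-placement rate means deliverability is improving. A negative one is an early warning, even if reply counts still look fine.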

5) Why we built this as a product

For 8 months, we ran this as a service:

- Clients shared their ICP (ideal customer profile)

- Humans found signals

- AI drafted multiple hypotheses per signal

- Guardrails blocked bad emails

- We orchestrated timing + follow-ups

It worked - but it didn’t scale.

So we built the backend that lets teams run this themselves:

- Batching + pacing (so emails don’t all send at once)

- Hypothesis generation (multiple angles per signal)

- Guardrails (block spammy/low-quality drafts)

- Thread memory (follow-ups reference real context)
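To make the batching + pacing idea concrete, here is a minimal sketch of spreading sends across time slots. The function name, batch size, and gap are illustrative defaults, not the values our backend uses.

```python
from datetime import datetime, timedelta


def pace_sends(
    email_ids: list[str],
    start: datetime,
    batch_size: int = 20,
    gap: timedelta = timedelta(minutes=30),
) -> list[tuple[str, datetime]]:
    """Assign each email a send time so batches are spaced `gap` apart."""
    schedule = []
    for index, email_id in enumerate(email_ids):
        batch_number = index // batch_size
        schedule.append((email_id, start + batch_number * gap))
    return schedule
```

With `batch_size=2`, for example, the third email in the list is pushed to the second slot, 30 minutes after `start`.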

The human still finds the signal and picks the positioning angle.

The AI handles the tedious research, drafting, and quality control.